
DeltaMax™: Intelligent Data Quality Monitoring Platform

Never Fly Blind Again.

DeltaMax™ is an advanced, AI-driven platform designed to monitor and safeguard enterprise data pipelines. Unlike traditional rule-based systems, it proactively identifies, analyzes, and helps resolve hidden data quality issues—even those you didn’t anticipate.

With DeltaMax, your organization gains continuous visibility into data health, enabling smarter decisions and reducing operational risk.

The Problem: When Data Pipelines Become Invisible

Modern businesses rely on data, but managing it has become increasingly complex. Data flows from multiple sources with different formats, volumes, and timings, making it hard to track and control.

Common challenges include:

- Sudden data changes that pass validation checks

- Inconsistencies during system migrations

- Excessive time spent investigating alerts

The Solution: DeltaMax™

DeltaMax™ provides intelligent visibility into your data ecosystem. Built for scalable environments, it uses adaptive machine learning to monitor, validate, and analyze data continuously.

It focuses on:

- Anomaly Detection

- Reliability of Data

Instead of just flagging issues, DeltaMax helps identify root causes—reducing investigation time and improving data trust.

Key Features

Anomaly Detection

Seeing Beyond Rules

Not all data issues are obvious. Many appear as small deviations—slight drops in volume, unexpected spikes, or subtle shifts in patterns. These often go unnoticed because they don’t violate predefined checks.

DeltaMax™ continuously observes how data behaves across sources and over time. It learns normal patterns and establishes a baseline for comparison. When data deviates from this baseline in a meaningful way, it is automatically flagged.

This eliminates the need to manually define every possible rule.

With this approach:

- Hidden issues are detected early

- Only meaningful deviations are highlighted, reducing noise

- Teams gain a deeper understanding of data behavior across systems
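As a rough illustration of the baseline idea described above, the sketch below flags values that stray far from a trailing baseline. It is not DeltaMax's actual model: the function name `flag_anomalies`, the 7-point window, and the z-score threshold of 3 are all assumptions chosen for the example.

```python
from statistics import mean, stdev


def flag_anomalies(values, window=7, threshold=3.0):
    """Return indices whose value deviates strongly from the trailing baseline.

    The baseline for each point is the mean and sample standard deviation of
    the previous `window` observations (an illustrative assumption).
    """
    flagged = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            # Perfectly flat history: treat any change as a deviation.
            if values[i] != mu:
                flagged.append(i)
            continue
        z_score = abs(values[i] - mu) / sigma
        if z_score > threshold:
            flagged.append(i)
    return flagged


# Hypothetical daily row counts with one sudden drop at index 8.
daily_row_counts = [1000, 1010, 990, 1005, 995, 1002, 998, 1001, 640, 1003]
print(flag_anomalies(daily_row_counts))
```

No rule such as "row count must exceed 900" is written anywhere in this sketch; the drop stands out only because it is far from what the recent history suggests.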

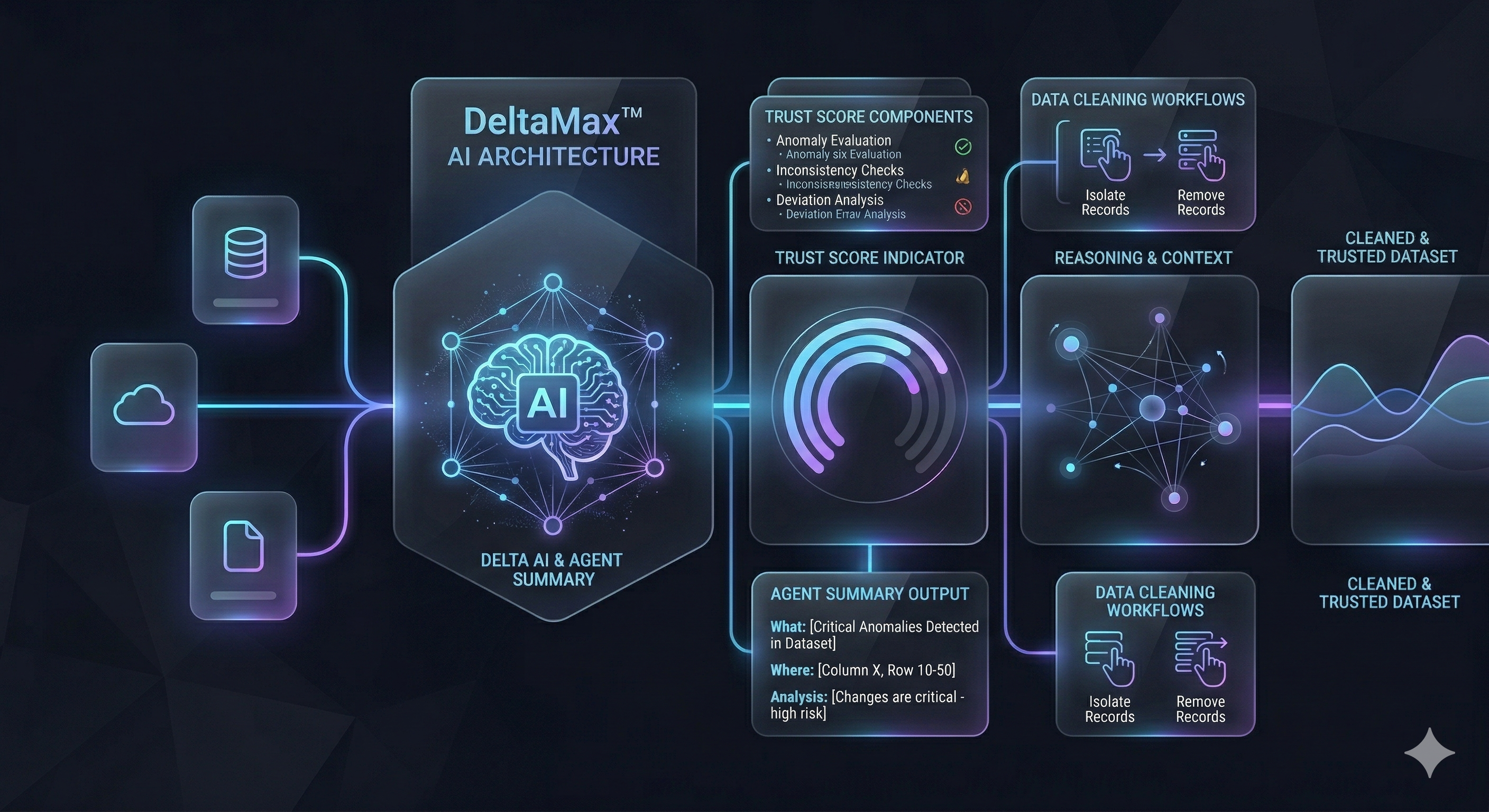

Trust Score, Agent Summary, and Data Cleaning

In most systems, data issues appear as alerts—but alerts alone don’t tell you whether the data is still usable. A dataset might have minor changes or critical failures, yet both often look the same without deeper analysis.

DeltaMax™ addresses this gap by introducing two tightly connected layers of understanding.

The Trust Score acts as a real-time indicator of data reliability. It evaluates multiple factors such as anomalies, inconsistencies, and deviations from expected behavior, and converts them into a single, easy-to-understand score.

Alongside this, the Agent Summary provides contextual explanation. Rather than just highlighting that something changed, it explains:

- what exactly changed in the data

- where the change occurred

- whether the change is expected, minor, or critical

This combination removes ambiguity.

Teams no longer need to correlate multiple signals or run separate checks to understand impact. The system already connects the dots—linking detection with explanation and reliability.
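To make the idea of a single score concrete, here is a minimal sketch of how several signals could be folded into one number and mapped to the expected, minor, and critical labels. The weights, the thresholds, and the names `HealthSignals`, `trust_score`, and `severity` are hypothetical; the source does not describe how DeltaMax actually computes its Trust Score.

```python
from dataclasses import dataclass


@dataclass
class HealthSignals:
    anomaly_rate: float        # share of records flagged as anomalous, 0..1
    inconsistency_rate: float  # share of records failing consistency checks, 0..1
    drift: float               # normalized deviation from expected behavior, 0..1


# Hypothetical weights for illustration; they sum to 1.
WEIGHTS = {"anomaly_rate": 0.5, "inconsistency_rate": 0.3, "drift": 0.2}


def trust_score(signals):
    """Fold the signals into a 0-100 score, where 100 means fully trusted."""
    penalty = 0.0
    for name, weight in WEIGHTS.items():
        value = min(max(getattr(signals, name), 0.0), 1.0)  # clamp to 0..1
        penalty += weight * value
    return round(100 * (1 - penalty), 1)


def severity(score):
    """Map a score to a label using assumed cut-offs of 90 and 70."""
    if score >= 90:
        return "expected"
    if score >= 70:
        return "minor"
    return "critical"


signals = HealthSignals(anomaly_rate=0.1, inconsistency_rate=0.2, drift=0.5)
score = trust_score(signals)
print(score, severity(score))
```

A weighted penalty is only one possible design; its appeal is that each factor's contribution to the final score stays easy to explain.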

Beyond detection and explanation, DeltaMax™ also enables data correction workflows. Once anomalies are identified and understood, the platform can isolate or remove problematic records, allowing teams to work with a clean, trusted dataset.

In practice, this means:

- Data health is no longer abstract: it is measurable and visible

- Investigation effort is significantly reduced because context is built-in

- Decisions can be made faster, with clear awareness of risk and impact

Instead of working through layers of alerts and validations, teams interact with data in a more direct way—they see its condition, understand its impact, and act immediately.

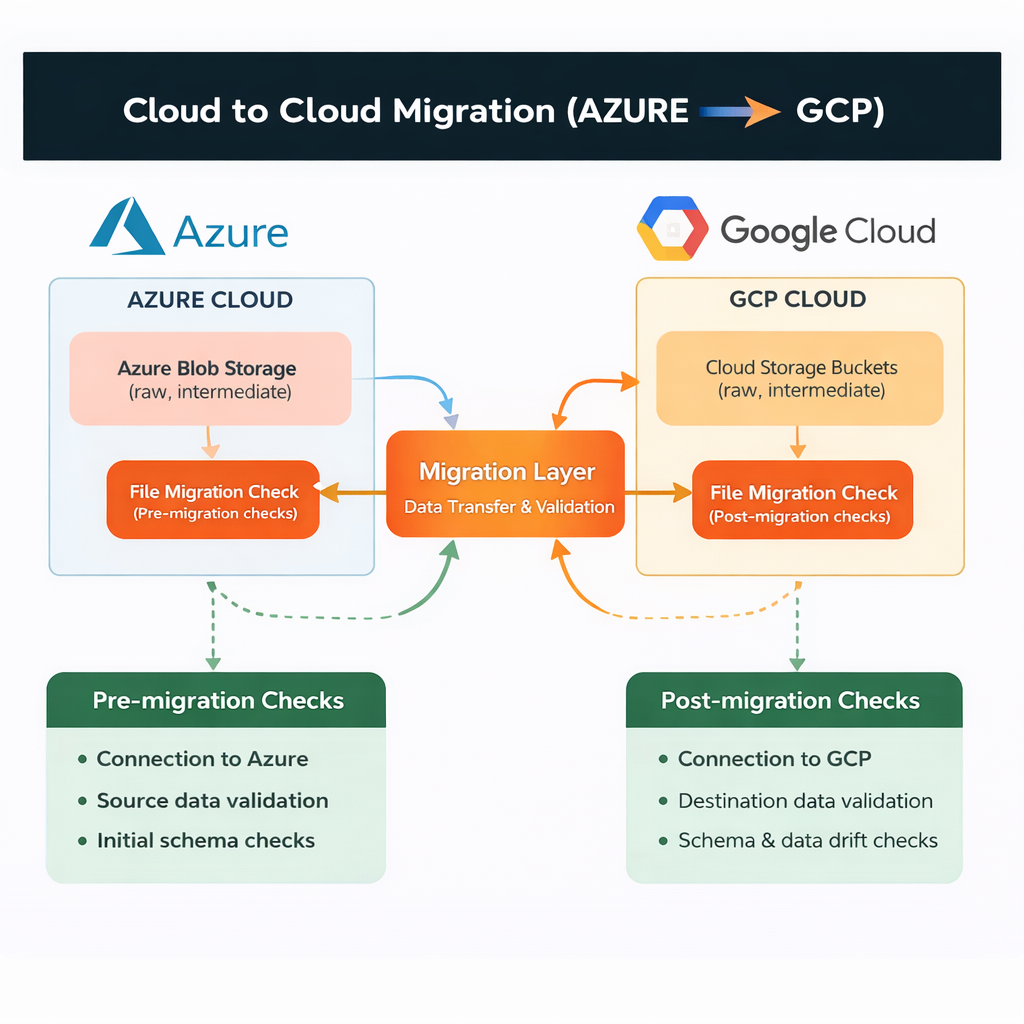

Importance of Data Quality in Cloud-to-Cloud Migration

Data migration may sound simple—just move data from one system to another. But in reality, it’s much more than that. The goal is not just transfer, but to make sure the data remains accurate, consistent, and usable in the new system.

If the data is already messy or inconsistent before migration, those issues don’t disappear—they grow. What starts as small errors can turn into broken reports, failed processes, and unreliable insights after the move. That’s why data quality is not a side task in migration—it’s the foundation of success.

What Can Go Wrong

Incorrect or incomplete data gets transferred, leading to wrong outputs in the new system. Business processes may stop working properly because they depend on clean and structured data.

How Data Quality Is Maintained

To avoid these issues, data quality needs attention at every stage of migration—not just at the end.

Before migration, it’s important to understand the structure of the data and identify any gaps or inconsistencies.

During migration, the focus shifts to making sure the transfer is accurate. Data needs to be continuously monitored to ensure that what leaves the source system matches what arrives in the target system.

After migration, the job is not over. The migrated data must be validated again to confirm everything is correct. Ongoing checks help ensure stability, so systems continue to run smoothly without hidden issues.
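One common way to confirm that what leaves the source matches what arrives in the target is to compare record fingerprints on both sides. The sketch below assumes rows are available as dictionaries with an `id` key; the function names and sample data are illustrative and do not represent DeltaMax's own reconciliation logic.

```python
import hashlib


def row_fingerprint(row):
    """Stable hash of a row's values; keys are sorted so column order is ignored."""
    canonical = "|".join(f"{key}={row[key]!r}" for key in sorted(row))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def reconcile(source_rows, target_rows, key="id"):
    """Compare source and target by key and report every discrepancy found."""
    source = {row[key]: row_fingerprint(row) for row in source_rows}
    target = {row[key]: row_fingerprint(row) for row in target_rows}
    shared = source.keys() & target.keys()
    return {
        "missing_in_target": sorted(source.keys() - target.keys()),
        "unexpected_in_target": sorted(target.keys() - source.keys()),
        "mismatched": sorted(k for k in shared if source[k] != target[k]),
    }


# Hypothetical sample: row 2 was altered in transit and row 3 never arrived.
source_rows = [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": "b@example.com"},
    {"id": 3, "email": "c@example.com"},
]
target_rows = [
    {"email": "a@example.com", "id": 1},
    {"id": 2, "email": "B@example.com"},
]
print(reconcile(source_rows, target_rows))
```

Matching row counts alone would miss the altered record; comparing per-row hashes catches both dropped and silently changed data.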

DeltaMax Approach

We believe that reliable migration starts with reliable data. Instead of treating data quality as a one-time checkpoint, we approach it as a continuous process.

We focus on keeping data clean and consistent both before and during migration. Rather than relying only on predefined checks, we continuously monitor data to catch unexpected issues. Early detection is key—it helps prevent small problems from becoming major failures later.

The result is a smoother migration experience. Data moves accurately, systems remain stable, and teams spend less time fixing errors. Most importantly, organizations gain confidence in their data, knowing it can be trusted from day one in the new system.

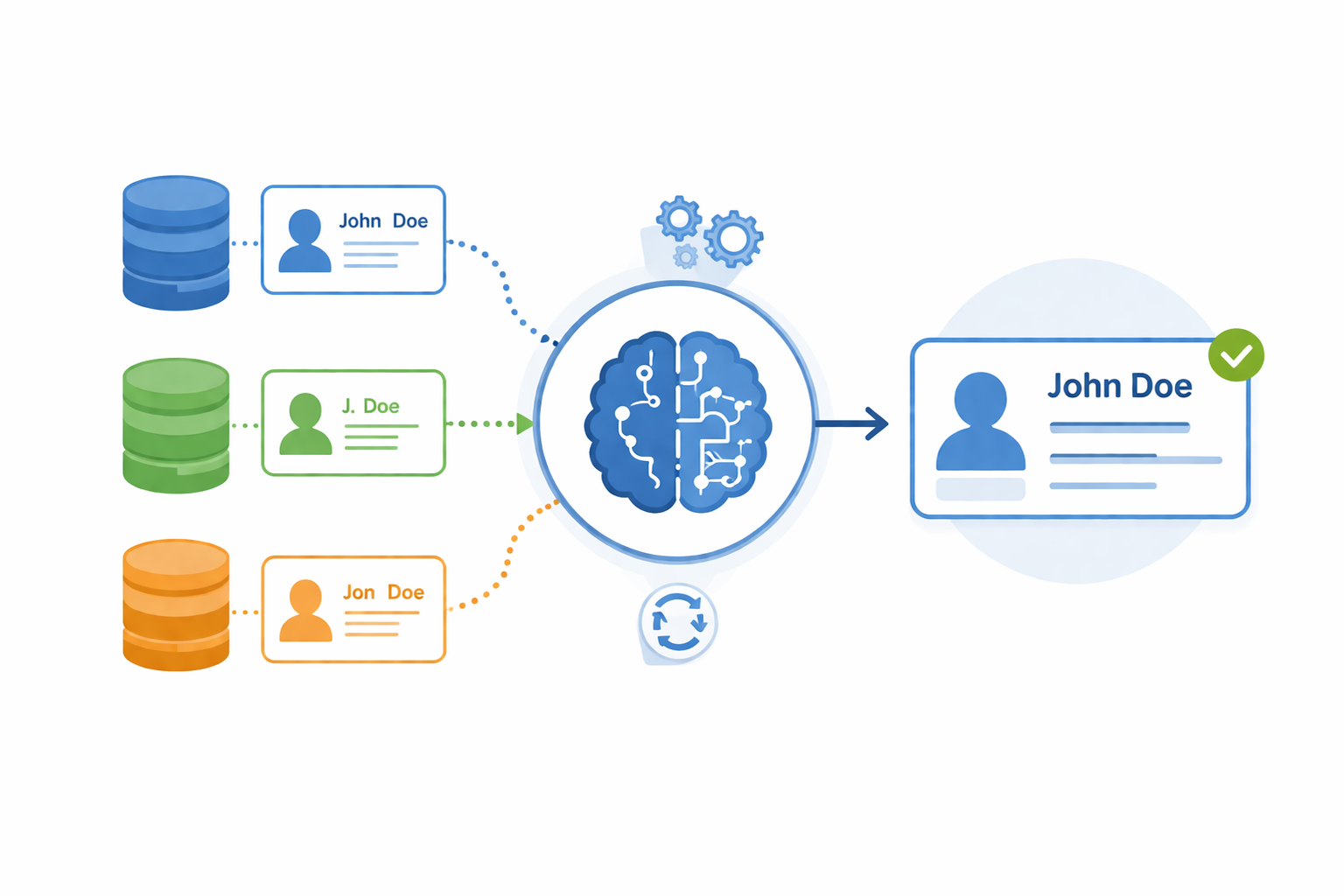

Master Data Management

One Entity. One Truth. Everywhere.

As data grows across systems, the same entity starts appearing in multiple forms—slightly different names, formats, or missing attributes. Over time, this leads to duplication, inconsistency, and confusion about which version is correct.

DeltaMax™ addresses this by bringing intelligence into how master data is identified and managed.

Instead of relying only on exact matches, it understands relationships between records—linking similar entries even when they are not identical. These records are then standardized and aligned into a single, consistent representation.

What this enables:

- A unified and consistent view of core entities across systems

- Elimination of duplicate and conflicting records

- Standardized formats and aligned data definitions

- Stronger control over how critical data is created and updated
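Linking similar entries that are not identical can be sketched with simple string similarity. The example below uses Python's standard `difflib.SequenceMatcher` as a stand-in technique; the normalization rules, the 0.85 threshold, and the function names are assumptions for illustration, not a description of how DeltaMax matches records.

```python
from difflib import SequenceMatcher


def normalize(name):
    """Lowercase, drop periods and commas, and collapse repeated whitespace."""
    return " ".join(name.lower().replace(".", "").replace(",", "").split())


def similarity(a, b):
    """Similarity ratio between 0 and 1 for two normalized names."""
    return SequenceMatcher(None, normalize(a), normalize(b)).ratio()


def group_similar(names, threshold=0.85):
    """Greedily group names that are close to the first member of a group."""
    groups = []
    for name in names:
        for group in groups:
            if similarity(name, group[0]) >= threshold:
                group.append(name)
                break
        else:
            # No existing group was close enough, so start a new one.
            groups.append([name])
    return groups


# Hypothetical records for the same entities, entered inconsistently.
records = ["Acme Corp.", "ACME corp", "Jon Smith", "John Smith", "Globex Inc"]
for group in group_similar(records):
    print(group)
```

Each resulting group is a candidate for consolidation into one standardized record. A production system would typically compare several attributes rather than the name alone.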

By establishing a reliable foundation for master data, organizations move from fragmented records to a connected, trustworthy data ecosystem—where every downstream process operates on the same truth.

Why DeltaMax Wins Over Existing Solutions

DeepSeek Evaluation

DeepSeek’s assessment focuses on DeltaMax’s strength in data migration and reliability, especially within cloud ecosystems. It highlights that DeltaMax is not just moving data, but actively ensuring that the data remains accurate and trustworthy throughout the migration process. According to the evaluation, DeltaMax stands out because it uses a multi-check approach, validating data at different stages instead of relying on a single verification step.

The key takeaway from DeepSeek’s perspective is that DeltaMax delivers a structured and reliable pipeline that ensures high-quality data reaches the destination system. This makes it particularly effective for complex migrations where maintaining data integrity is critical.

DeltaMax is recognized as a purpose-built solution for data migration, ensuring data accuracy through multiple validation layers and delivering trusted data in the target system.

Link: https://chat.deepseek.com/share/hjhebmro4ste4x3muw

Claude Evaluation

Claude’s evaluation presents a broader view, positioning DeltaMax as a comprehensive data quality and reliability platform. It emphasizes that DeltaMax goes beyond just detecting issues by providing a complete understanding of data health. This includes capabilities like identifying anomalies, measuring trust through scoring, and analyzing the root cause of problems.

Another important aspect highlighted is DeltaMax’s ability to support organizations at scale. With features such as continuous monitoring, enterprise readiness, and integration with advanced analytics, it ensures that data remains consistent and reliable across different systems over time.

Claude also recognizes DeltaMax’s strength in combining multiple capabilities into a single platform, reducing the need for multiple disconnected tools.

DeltaMax is identified as a full-scale data reliability platform that not only detects issues but explains them, measures trust, and supports long-term data consistency across the enterprise.

